Company Says Supply-Chain Risk Label Threatens Billions in Contracts

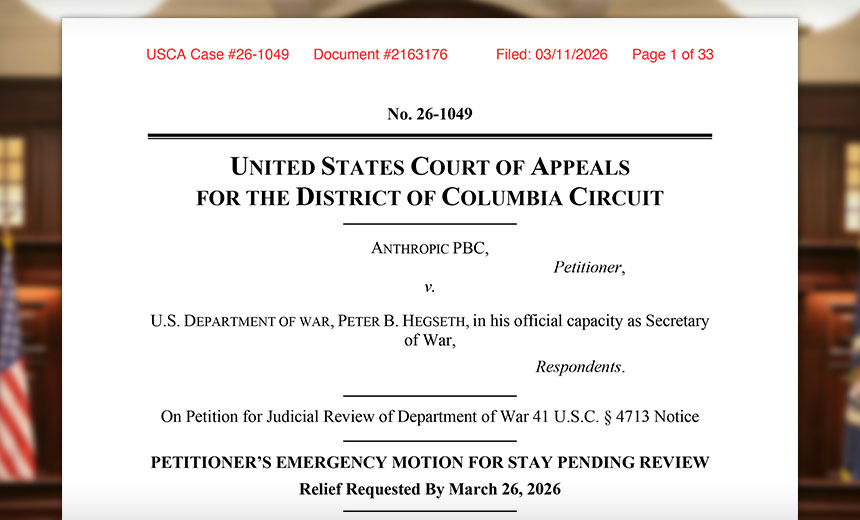

Anthropic late Wednesday asked the U.S. Court of Appeals to temporarily block a Department of War decision that designated the company as a supply-chain risk.

The San Francisco-based company said the designation effectively bars defense contractors from using Anthropic’s Claude AI models and could trigger government-wide blacklisting from federal procurement. Anthropic argued the U.S. government’s label is unlawful, retaliatory and procedurally defective, and it asked the Court of Appeals to pause the government’s action while the case is reviewed.

“This case involves extraordinary assertions of executive power. It began not with a reasoned agency decision but with a social media post by Secretary Hegseth designating Anthropic – an American company and leading developer of frontier artificial-intelligence systems – a ‘Supply-Chain Risk,’” Anthropic wrote in a 238-page filing submitted at 11:15 p.m. on Wednesday.

The company alleged in a 48-page lawsuit Monday that the government punished Anthropic economically after the company argued its AI shouldn’t be used for autonomous lethal warfare or mass surveillance of Americans.

Anthropic’s Court of Appeals complaint was filed the same day the company initiated litigation in the Northern District of California accusing the U.S. government of behaving unconstitutionally by retaliating against the AI developer for speech and viewpoints about AI safety. The government’s ban on Anthropic from federal government use and contracting violates constitutional and statutory law, the firm argues.

The designation could effectively bar the company’s Claude AI models from use by military contractors, threatening hundreds of millions or potentially billions of dollars in revenue. The Department of War declined an Information Security Media Group request for comment on Anthropic’s lawsuit, while Anthropic didn’t respond to a request for additional comment (see: Anthropic Sues After US Government Cuts Off AI Contracts).

Anthropic: Supply Chain Risk Designation Process Not Followed

Designating a contractor a supply-chain risk normally involves risk assessments, internal recommendations and procedural safeguards before any determination is made, according to Anthropic. But in this case, Anthropic said, the government issued a public denunciation first and then attempted to retroactively justify it through a statutory notice issued days later, which the company characterized as a politically motivated reaction.

“The post purports to order any entity doing business with the Department [of War] to immediately cease working with Anthropic,” Anthropic wrote in the complaint. “At the same time, the Secretary directed Anthropic to continue providing its AI tools to the Department for up to six months.”

Congress created strict limits when it gave agencies the authority to remove companies from federal supply chains. The rules focus on the infiltration of government technology supply chains by hostile foreign actors or compromised vendors, Anthropic said. Instead of documenting a risk assessment and providing notice, the secretary allegedly declared the designation immediately and only later issued a brief notice.

“The Secretary may invoke § 4713 authorities ‘only after:’ (1) obtaining a recommendation, including a risk assessment, identifying a ‘significant supply chain risk’ in a ‘covered procurement;’ (2) providing ‘information that forms the basis for the recommendation’ to a targeted ‘source;’ (3) affording the source 30 days to respond,” Anthropic wrote in the complaint.

Section 4713 is designed to address risks that technology suppliers might sabotage government systems, introduce malicious functionality, enable foreign espionage, or manipulate critical infrastructure. In contrast, Anthropic said it has worked closely with the U.S. government, including defense agencies, and that its AI models have undergone extensive vetting and obtained security certifications.

“Since as early as 2024, Anthropic has led the field in supporting U.S. national security priorities,” said Anthropic co-founder Jared Kaplan. “These include collaboration with federal partners on AI safety research, evaluation frameworks and strategic cloud partnerships as AI assumed a more prominent national security role. As a result, Anthropic’s AI models were the first ever to be used by American warfighters on classified systems.”

Anthropic: Supply Chain Risk Label Already Disrupting Business

Anthropic said the government’s supply-chain risk designation damages its reputation and that due process protections apply when the government stigmatizes a private company and attaches legal consequences to that label. Before imposing such consequences, the U.S. Constitution requires at least notice of the evidence against the company and a meaningful opportunity to rebut it, Anthropic said.

“Both the Supreme Court and this court have recognized that the right to know the factual basis for the action and the opportunity to rebut the evidence supporting that action are essential components of due process,” Anthropic wrote in the complaint. “That essential component of due process was not satisfied here.”

Anthropic said the U.S. government’s designation of the company as a supply-chain risk is already disrupting its business relationships, with customers questioning whether they can continue working with Anthropic and major contracts being canceled. Because the federal government generally enjoys sovereign immunity from damages, Anthropic argues that these losses can’t be recovered later, making the harm irreparable.

“Anthropic’s likelihood of success on its constitutional claims establishes irreparable injury,” Anthropic wrote in the complaint. “The loss of constitutional freedoms unquestionably constitutes irreparable injury. The government’s violation of Fifth Amendment due process rights is irreparable harm, as is its ongoing retaliation in response to the exercise of First Amendment rights.”

Anthropic said the government will not be harmed by a temporary stay since the Department of War itself has required Anthropic to continue providing its AI systems for several months during the transition period. If those systems truly posed a national-security risk, Anthropic said, the government would not continue using them.

“The government has identified no legitimate, non-retaliatory interest in a supply-chain designation,” Anthropic wrote in the complaint. “Staying the secretary’s actions would thus inflict no harm, but would merely preserve the pre-Feb. 27 status quo. A stay also would not disrupt the Department’s intent to continue using Claude for up to six months for military or other national-security operations.”